***Disclaimer***

This is a very preliminary investigation on my part. I'm not going to move on these results in any production system at least until I've also tested effects on checkpoint for persistent databases. Probably not until I understand more about the mechanics of this throttle, either. So I plead that no-one incorporates -k into their own system based on this brief write-up... you'll need some extensive testing for your own context.

***************

Months ago, Jimmy May (@aspiringgeek on Twitter) asked me if I'd used the SQL Server -k startup option. He's really knowledgeable about interaction of SQL Server with storage (an understatement) and a thorough tester - he's seen some good results with -k. I'd read about this startup option, but never tested it and hadn't seen it deployed on any systems I'd worked with. What I'd read to that point had to do with checkpoint throttling. Details on that angle of -k startup option can be found in kba 929240 below.

FIX: I/O requests that are generated by the checkpoint process may cause I/O bottlenecks if the I/O subsystem is not fast enough to sustain the IO requests in SQL Server 2005

https://support.microsoft.com/en-us/help/929240

Now the systems I work on stress SQL Server in lots of corner-case ways 😁 but an overwhelming checkpoint is something I haven't yet observed.

On the other hand, I do see overwhelming tempdb spills. Tempdb sort/hash spill writes are some of the most aggressive writes to come out of SQL Server. Systems susceptible to them are advised to consider how to mitigate performance risk to that very system, as well as mitigating the risk of becoming a noisy neighbor if shared storage or shared plumbing (ESXi server, top-of-rack switch, etc) is involved.

The most common performance interventions for tempdb - trace flag 1117 or equivalent, trace flag 1118 or equivalent, increasing data file count to reduce allocation page contention - do not mitigate the risk posed by a tempdb spill write flood. In fact, since none of the resources for those interventions I am aware of address the underlying Windows volume, vHBA, or ESXi host LUN layout for tempdb there is a chance of actions taken to alleviate allocation page contention increasing the risk posed by tempdb spills. More on that another day - io weaving is a topic I'll have to prepare some diagrams for 😁

Most disk IO throttles are a poor fit for trying to mitigate this risk also. VMware provides SIOC and adaptive queue throttling if using vmdks. Neither work well to tame tempdb write floods without also throttling access to persistent databases. Many storage arrays provide QoS controls at their front end adapters for rate limiting by IOPs or bytes/sec. These limits can apply per initiator (host hadapter) or per target LUN depending on the array model. Per LUN QoS can be ok... but also unwieldy. What about IO governance in Resource Governor? It works per volume!! Yay! But its share-based - rather than limit-based - and will kick in under contention only. So... nope, not that either (but do keep in mind that RG IO governance works per Windows volume - I'll come back to that someday and how it fits into my recommendation NOT to co-locate data files for tempdb and persistent databases on the same Windows volume😉).

***Same Day Update***

Thanks to Alex Friedman (@alexf0101 on twitter) for pointing out I was wrong about Resource Governor IO governance. It is limit-based governance. I'll have to give it a try 😀

IO Resource Governance in SQL Server 2014

https://blogs.technet.microsoft.com/dataplatforminsider/2013/11/07/io-resource-governance-in-sql-server-2014/

Use SQL Server 2014 Resource Governor to Throttle Processes for IO Usage

https://www.mssqltips.com/sqlservertip/3370/use-sql-server-2014-resource-governor-to-throttle-processes-for-io-usage/

******

But here's something tantalizing. A kba about -k startup option initially written for SQL Server 2012. Hmm. It mentions throttling tempdb "work files". Gives an example with checkdb.

Enable the "-k" startup parameter to control the rate that work files can spill to tempdb for SQL Server

https://support.microsoft.com/en-us/help/3133055/enable-the--k-startup-parameter-to-control-the-rate-that-work-files-can-spill-to-tempdb-for-sql-server

Recall that I am using a create index statement with sort_in_tempdb as my proxy for simulating large sort/hash spills. You can see my initial work with that here.

tempdb: "insert into... select" vs "select... into" vs index sort_in_tempdb write behavior

http://sql-sasquatch.blogspot.com/2017/03/tempdb-index-sortintempdb-vs-select.html

But what does throttling "work files" mean? Is the throttle selectively restricted to tempdb activity only? What about temp table usage (that's not my focus for a while - but its an important question). What happens to "create index" with sort_in_tempdb if the -k option is enabled? I'm glad you asked about "create index"!!

Let's start with the unthrottled create index, on an 8 vcpu VM with all flash storage. Its SQL Server 2016 SP1. Maxing out two ports of the adapter 😀 Check out that write latency on the right! Whoo boy! Years ago Paul Randall asked his readers to send in disk service time numbers from their SQL Server instances. Tempdb write latencies were curiously high. I know one possible reason 😀

These graphs are from 1 second perfmon captures.

So... both to keep from saturating the adapter (and punishing any activity sharing the adapter with very high service times) AND to keep from overwhelming storage with writes, might want to throttle that activity.

What happens if we add -k1200 to startup? Interesting. The entire operation stretched out in elapsed time by about 5 seconds. Bytes/sec didn't exceed 1500 mb. But look at that difference in write latency!! A single point of stress at about 140 ms, and everything else was nice and low. Remember - at saturation, service times get punished. Keep a resource from saturation - even just by a little bit - and you can be rewarded with much low service times.

But... maybe the -k didn't really do anything? After all - it didn;t *really* throttle to 1200 mb/sec did it? And... this is right around a 10% difference in write bytes/sec to tempdb. Within variance for some type of tests. I don't have the patience today to keep repeating this test, though 😜 So, lets try to answer that question with a different throttle value. Onward to 800!

OK... that's enough for me to say -k is really doing something. Even lower bytes/sec to tempdb. Lower peak write latency. Still a nasty peak, but very brief.

What about throttled to 400? Hey... this one looks really nice from the write service time perspective. Interesting that tempdb write bytes/sec is as variable as it is - something to do with the mechanics of the throttle. A lot of this stuff might be more even on a physical server than in a VM.

Although I don't have time to do multiple repeat tests, I always try to make time to not stop at the first result I like. So let's go to 200!!!

Excellent! The first result I dislike! Write latency stays super low except for the peak at the end. Write bytes/sec is reasonable with an interesting spike. (I hope to learn more about how this throttle actually works - that'll help me plan how to work it into best practices and/or performance interventions.) But the reason I don't like this result is the really choppy CPU utilization. That hints that the throttle mechanism itself is under stress. So for this workload on this system, throttling to 200 is too aggressive a throttle.

So where would I go from here? When its time for more testing, I'll recommend ten or more runs of unthrottled, 1200, 800, 400. From the best results, ten or more runs at that level plus 100, and minus 100. Then we'll see where we're at. Till then...

*whispers* smash your axioms

Friday, March 31, 2017

Wednesday, March 29, 2017

#sqlserver tempdb: "insert into... select" vs "select... into" vs index sort_in_tempdb write behavior

I believe pretty strongly that a collection of best practices should keep workload in mind. Form fit to function, if you will.

So when it comes to tempdb best practices, I like to remind folks that not all use of tempdb looks the same from the system side.

On the systems I work with, sort/hash spill is the main component of tempdb use. On many OLTP systems, rapid create/drop of temp tables is a much more prominent factor in tempdb considerations. On some systems, row versioning or perhaps use of large local or global temp tables is a significant consideration.

Two concerns arise from the various uses of tempdb:

Today I want to turn to the question of how some tempdb activity engages with storage.

Sort and hash spill is my primary concern, but today I'm going to weasel out by using CREATE INDEX with the sort_in_tempdb option as a proxy for sort spill. At a later time (once my other tests behave a bit more) I'll come back to this and compare sort and hash spills to sort_in_tempdb activity.

The system I'm using is an 8 vcpu VM, set up with storage from an all flash array. SQL Server 2016 SP1.

Here's the setup for my test.

Crude, but effective. You can probably tell that I was initially working with a table 3 times as large and scaled it back :-) The large string in each row forces 1 row per page, giving a fairly predictable table size when the table is not compressed.

For the first test scenario, lets create a temp table, and insert all rows into it.

OK, now lets use a "SELECT... INTO" query.

Finally, lets use "CREATE INDEX" with sort_in_tempdb.

Now... lets see how that all turned out. 1 second perfmon captures below.

Interesting that the "select... into" took a little longer, isn't it :-) But the two temp table operations were close to each other in write bytes/sec to tempdb. Look how aggressive that "create index" was though! Maxed out adapter bandwidth.

Let's look at writes/sec rather than write bytes/sec.

Whoa. The "select... into" in the middle issued wwwaaaayyyy more writes, and way more writes/sec to tempdb than either of the other operations. But... each operation wrote the same amount to tempdb. So the "select... into" was using some pretty small writes in comparison.

Based on the number of writes, the fact that the "select... into" showed higher disk queue length than the first table option isn't too surprising. But... maybe its a little surprising that the "create index" has such high queue length, considering the much lower number of writes/second?

But if we look at sec/write... aha! The table insert and "select... into" operations have write latency staying well below 64ms. Its a little surprising to me that the "insert into" had higher peak write latency than the "select into..." That may certainly be an oddity of that particular test run. But I don't have a long enough attention span to repeat the test 10 or more times to even it out :-) Nah, the real surpise is the write latency over 300 ms in the "create index". On flash storage, too.

So... different uses of tempdb stress storage in different ways. If I'm correct that "create index" is a good proxy for hash/sort spills, then tempdb spills require special consideration in how to destage aggressive write bytes/sec.

And... a theme I'll come back to in the future... relevant to the high write latency experienced by the "Create index"...

strange things happen to service time at saturation.

So when it comes to tempdb best practices, I like to remind folks that not all use of tempdb looks the same from the system side.

On the systems I work with, sort/hash spill is the main component of tempdb use. On many OLTP systems, rapid create/drop of temp tables is a much more prominent factor in tempdb considerations. On some systems, row versioning or perhaps use of large local or global temp tables is a significant consideration.

Two concerns arise from the various uses of tempdb:

- do they all stress allocation pages in the same way? Tempdb allocation page contention and resulting pagelatch waits are the classic drivers of increasing the tempdb data file count for a given workload.

- do they all utilize the storage subsystem in the same way? This is another, separate consideration in tempdb design which may influence decisions about which RAID level or disk group to put tempdb on, whether to use flash or HDD, how many Windows volumes to use, etc.

Today I want to turn to the question of how some tempdb activity engages with storage.

Sort and hash spill is my primary concern, but today I'm going to weasel out by using CREATE INDEX with the sort_in_tempdb option as a proxy for sort spill. At a later time (once my other tests behave a bit more) I'll come back to this and compare sort and hash spills to sort_in_tempdb activity.

The system I'm using is an 8 vcpu VM, set up with storage from an all flash array. SQL Server 2016 SP1.

Here's the setup for my test.

/* test setup */ create table test_small (num1 BIGINT, string VARCHAR(4000), num2 BIGINT, constraint pk_test_small PRIMARY KEY CLUSTERED (num1)) WITH (data_compression = page); DECLARE @line VARCHAR(80) = ' 12345678901234567890123456789012345678901234567890123456789012345678901234567890'; DECLARE @string VARCHAR(4000) = @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line + @line; ;WITH L0 AS (SELECT 1 AS c UNION ALL SELECT 1), -- 2 L1 AS (SELECT 1 AS c FROM L0 A CROSS JOIN L0), -- 4 L2 AS (SELECT 1 AS c FROM L1 A CROSS JOIN L1), -- 16 L3 AS (SELECT 1 AS c FROM L2 A CROSS JOIN L2), -- 256 L4 AS (SELECT 1 AS c FROM L3 A CROSS JOIN L3), -- 65536 L5 AS (SELECT 1 AS c FROM L4 A CROSS JOIN L4), -- 4294967296 L_FINAL AS (SELECT TOP (16777216) ROW_NUMBER() OVER (ORDER BY (SELECT NULL)) N FROM L5 ) INSERT INTO test_small SELECT N, @string, N FROM L_FINAL WHERE N % 3 = 0

Crude, but effective. You can probably tell that I was initially working with a table 3 times as large and scaled it back :-) The large string in each row forces 1 row per page, giving a fairly predictable table size when the table is not compressed.

For the first test scenario, lets create a temp table, and insert all rows into it.

/* scenario 1 */ create table #test_small_1 (num1 BIGINT, string VARCHAR(4000), num2 BIGINT); INSERT INTO #test_small_1 SELECT * FROM test_small;

OK, now lets use a "SELECT... INTO" query.

/* scenario 2 */ SELECT * INTO #test_small_2 FROM test_small;

Finally, lets use "CREATE INDEX" with sort_in_tempdb.

/* scenario 3 */ CREATE INDEX nci_small_test ON test_small(Num2) INCLUDE (String, Num1) WITH (SORT_IN_TEMPDB = ON, ONLINE = OFF, maxdop = 4);

Now... lets see how that all turned out. 1 second perfmon captures below.

Interesting that the "select... into" took a little longer, isn't it :-) But the two temp table operations were close to each other in write bytes/sec to tempdb. Look how aggressive that "create index" was though! Maxed out adapter bandwidth.

Let's look at writes/sec rather than write bytes/sec.

Whoa. The "select... into" in the middle issued wwwaaaayyyy more writes, and way more writes/sec to tempdb than either of the other operations. But... each operation wrote the same amount to tempdb. So the "select... into" was using some pretty small writes in comparison.

Based on the number of writes, the fact that the "select... into" showed higher disk queue length than the first table option isn't too surprising. But... maybe its a little surprising that the "create index" has such high queue length, considering the much lower number of writes/second?

But if we look at sec/write... aha! The table insert and "select... into" operations have write latency staying well below 64ms. Its a little surprising to me that the "insert into" had higher peak write latency than the "select into..." That may certainly be an oddity of that particular test run. But I don't have a long enough attention span to repeat the test 10 or more times to even it out :-) Nah, the real surpise is the write latency over 300 ms in the "create index". On flash storage, too.

So... different uses of tempdb stress storage in different ways. If I'm correct that "create index" is a good proxy for hash/sort spills, then tempdb spills require special consideration in how to destage aggressive write bytes/sec.

And... a theme I'll come back to in the future... relevant to the high write latency experienced by the "Create index"...

strange things happen to service time at saturation.

Tuesday, March 28, 2017

SQL Server Query Store Plan XML with more than 128 levels of nesting - Part II

Remember this?

SQL Server Query Store Plan XML with more than 128 levels of nesting - Part I

http://sql-sasquatch.blogspot.com/2017/03/sql-server-query-store-plan-xml-with.html

Here I am getting into trouble again.

One of the reasons I'm digging into query store is to round up queries timing out in the optimizer. Hoping to find ways for them to avoid that timeout and experience higher quality plans.

In theory this query would allow me to find the first query plan with plan_id higher than 53100 that experienced an optimizer timeout.

Oops. Got at least one of these between 53100 and the first plan_id to satisfy the query.

Identifying all of the highly nested plans, moving them to separate tables and removing the plans and runtime stats from query store would let me squeak by. But I don't like moving a problem from one table to another.

Y'know, looking at the plan XML below it strikes me most of the nested levels in a highly nested plan will be in the RelOp node.

Well... what if I cut off the first part of the XML - up to RelOp - and cut off the end, from the start of ParameterList. Those two pieces could be stitched together into well-formed XML, and without all of the operatores it should certainly fit within the 128 max nest levels.

Yep. I tried and it works :-)

SQL Server Query Store Plan XML with more than 128 levels of nesting - Part I

http://sql-sasquatch.blogspot.com/2017/03/sql-server-query-store-plan-xml-with.html

Here I am getting into trouble again.

One of the reasons I'm digging into query store is to round up queries timing out in the optimizer. Hoping to find ways for them to avoid that timeout and experience higher quality plans.

In theory this query would allow me to find the first query plan with plan_id higher than 53100 that experienced an optimizer timeout.

WITH XMLNAMESPACES('http://schemas.microsoft.com/sqlserver/2004/07/showplan' AS p) SELECT TOP (1) qp.plan_id FROM (SELECT qsp.plan_id, qsq.query_hash, CONVERT(XML, query_plan) AS qplan FROM sys.query_store_plan qsp JOIN sys.query_store_query qsq on qsq.query_id = qsp.query_id ) AS qp WHERE qp.qplan.exist('//p:StmtSimple/@StatementOptmEarlyAbortReason[.="TimeOut"]') = 1 AND qp.plan_id > 53100 ORDER BY qp.plan_id ASC OPTION (MAXDOP 1);

Oops. Got at least one of these between 53100 and the first plan_id to satisfy the query.

Identifying all of the highly nested plans, moving them to separate tables and removing the plans and runtime stats from query store would let me squeak by. But I don't like moving a problem from one table to another.

Y'know, looking at the plan XML below it strikes me most of the nested levels in a highly nested plan will be in the RelOp node.

Well... what if I cut off the first part of the XML - up to RelOp - and cut off the end, from the start of ParameterList. Those two pieces could be stitched together into well-formed XML, and without all of the operatores it should certainly fit within the 128 max nest levels.

Yep. I tried and it works :-)

;WITH XMLNAMESPACES('http://schemas.microsoft.com/sqlserver/2004/07/showplan' AS p) SELECT TOP (1) qp.plan_id FROM (SELECT qsp.plan_id, qsq.query_hash, CASE WHEN PATINDEX('%<RelOp NodeId="0"%', query_plan) > 0 THEN CONVERT(XML, SUBSTRING(query_plan, 1, PATINDEX('%<RelOp NodeId="0"%', query_plan) - 1) + SUBSTRING(query_plan, PATINDEX('%</QueryPlan>%', query_plan), LEN(query_plan) + 1) ) ELSE CONVERT(XML, query_plan) END AS qplan FROM sys.query_store_plan qsp JOIN sys.query_store_query qsq on qsq.query_id = qsp.query_id ) AS qp WHERE qp.qplan.exist('//p:StmtSimple/@StatementOptmEarlyAbortReason[.="TimeOut"]') = 1 AND qp.plan_id > 53100 ORDER BY qp.plan_id ASC OPTION (MAXDOP 1);

SQL Server Query Store Plan XML with more than 128 levels of nesting - Part I

I'm trying to analyze and categorize thousands of queries from the Query Store.

Wouldn't ya know it!! Some of my query plans exceed the 128 maximum levels of nesting allowed for the XML data type.

That results in errors when trying to convert the query store plan to XML. I want to convert the plans to XML in my queries in order to use exist(), value(), query() and nodes() xquery methods.

So I wrote a cursor to identify the plan_ids whose accompanying query plan XML had more than 128 levels of nesting.

In this SQL Server 2016 instance, trace flag 3226 is enabled globally - allowing me to use it as part of my check that convert to XML didn't result in an error.

My results:

OK... now I can identify the plans that have too many levels of nesting there's a good chance I can do something with them to circumvent the errors.

Yesterday I created auxiliary tables - one based on sys.query_store_plan and one based on sys.query_store_runtime_stats. I moved the identified plans and associated runtime stats to the auxiliary tables then used sp_query_store_remove_plan to remove the plan and its runtime stats from query store. But I've got a better idea I'll share soon...

*****

Wouldn't ya know it!! Some of my query plans exceed the 128 maximum levels of nesting allowed for the XML data type.

That results in errors when trying to convert the query store plan to XML. I want to convert the plans to XML in my queries in order to use exist(), value(), query() and nodes() xquery methods.

So I wrote a cursor to identify the plan_ids whose accompanying query plan XML had more than 128 levels of nesting.

In this SQL Server 2016 instance, trace flag 3226 is enabled globally - allowing me to use it as part of my check that convert to XML didn't result in an error.

SET NOCOUNT ON; DECLARE @error_msg NVARCHAR(256); DECLARE @plan_id BIGINT; DECLARE plan_cursor CURSOR FOR SELECT plan_id FROM sys.query_store_plan ORDER BY plan_id DESC; OPEN plan_cursor; FETCH NEXT FROM plan_cursor INTO @plan_id; WHILE @@FETCH_STATUS = 0 BEGIN BEGIN TRY ;WITH XMLNAMESPACES('http://schemas.microsoft.com/sqlserver/2004/07/showplan' AS p) SELECT 1 FROM ( SELECT CONVERT(XML, query_plan) qplan FROM sys.query_store_plan where plan_id = @plan_id) subQ WHERE subQ.qplan.exist('//p:TraceFlag/@Value[. = "3226"]') = 0 END TRY BEGIN CATCH SET @error_msg = error_message() PRINT CONVERT(NVARCHAR(32), @plan_id) + '-' + @error_msg END CATCH FETCH NEXT FROM plan_cursor INTO @plan_id END CLOSE plan_cursor; DEALLOCATE plan_cursor;

My results:

OK... now I can identify the plans that have too many levels of nesting there's a good chance I can do something with them to circumvent the errors.

Yesterday I created auxiliary tables - one based on sys.query_store_plan and one based on sys.query_store_runtime_stats. I moved the identified plans and associated runtime stats to the auxiliary tables then used sp_query_store_remove_plan to remove the plan and its runtime stats from query store. But I've got a better idea I'll share soon...

*****

SQL Server Query Store Plan XML with more than 128 levels of nesting - Part II

Monday, March 27, 2017

A Heap o' thoughts about VMware "Latency Sensitivity = High" & SQL Server

i was really glad to read this paper from the folks at VMware.

SQL Server VM Performance with VMware vSphere 6.5

https://blogs.vmware.com/performance/2017/03/sql-server-performance-vmware-vsphere-6-5.html

i'm glad they included latency sensitivity in the testing. but the testing methodology limits the usefulness of the conclusion for me.

First a matter of system setup: the max configuration of vcpu count = physical core count seems to have been used.

While certainly better than vcpu count greater than core count (avoiding competition among vcpus for time on core) some core time for nonvcpu worlds and for the hypervisor is unavoidable. That means competition for time-on-core for some of that VM's vcpus.

To neutralize that effect, keeping vcpu count *lower* than core count is expected where consistent peak performance per core is desired.

That's a somewhat similar strategy to reserving vcpu0 in a SQL Server guest for the txlog writer, for example.

Performance per core is an important perspective for SQL Server & Oracle database, because license cost per physical core or vcpu is typically one of the largest individual line items in system cost (if not the largest).

Keeping vcpu count below core count is likely to be even more important for peak performance as cores per socket continues to rise.

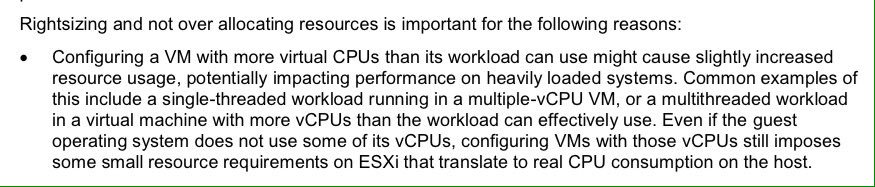

Consider this passage from the recently updated VMware SQL Server Best Practices Guide.

http://www.vmware.com/content/dam/digitalmarketing/vmware/en/pdf/solutions/sql-server-on-vmware-best-practices-guide.pdf

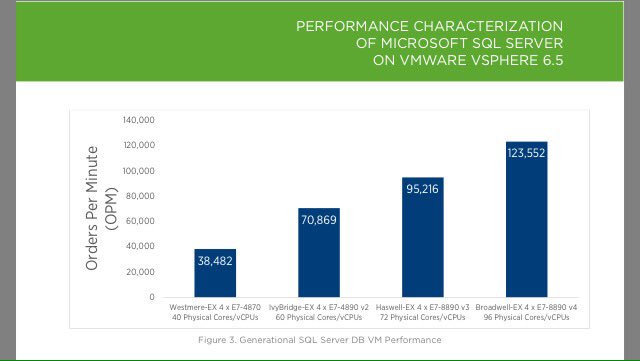

Here are some of the results included in the report.

Let's break that down into Orders per Minute (OPM) per physical core.

E7-4870: 962 E7-8890v2: 1181 E7-8890v3: 1322 E7-8890v4: 1287

So there was a drop - a small one - in OPM per physical core between v3 and v4.

In addition to max transactions/sec scale achieved at vcpu = core count on each platform, I'd be interested in comparison for equal vcpu count across platforms.

40 vcpu vm comparison on E7-4870, E7-8890v2, E78890v3, E78890v4.

60 vcpu vm comparison on E7-8890v2, E7-8890v3, E7-8890v4.

72 vcpu vm comparison on E7-8890v3, E7-8890v4.

In fact, to allow room for the nonvcpu worlds, what I'd really like to see is:

36 vcpu vm comparison on E7-4870, E7-8890v2, E78890v3, E78890v4.

56 vcpu vm comparison on E7-8890v2, E7-8890v3, E7-8890v4.

68 vcpu vm comparison on E7-8890v3, E7-8890v4.

92 vcpu vm on E7-8890v4.

But, of course there's only so much time for testing, and only so much attention that can be given to reading test results. 😀 Max scale results are what the largest share of the audience would be interested in. Still - I want to share what makes testing - even thorough, careful testing like this - not directly applicable to some contexts. Mine in particular. 😀

In reality, right-sizing the vcpu count for a system involves more than reserving adequate remaining CPU resources for nonvcpu worlds and the hypervisor. Including more vcpu than is needed in a VM brings additional overhead into the vm - and the effect can be significant.

The first thing to consider is the NUMA node boundary. The reason NUMA exists is to allow platforms to continue to scale to increasing levels of compute and memory resources. NUMA gives preferential access from core to memory based on locality of the memory to the core. Cross the NUMA node boundary in a memory access, and memory bandwidth becomes a consideration as does the increase in memory latency. This is true as well for interprocess communication between threads on cores across the NUMA node boundary. If there's a 14 vcpu vm on a server with 2 twelve core per socket processors... that vm may well be better off with 12 vcpu within a single NUMA node.

Even within a single NUMA node, lowering the number of vcpu reduces scheduling overhead on the hypervisor. Using 10 vcpus within a NUMA node when 6 would suffice? Those extra vcpus are adding scheduling overhead.

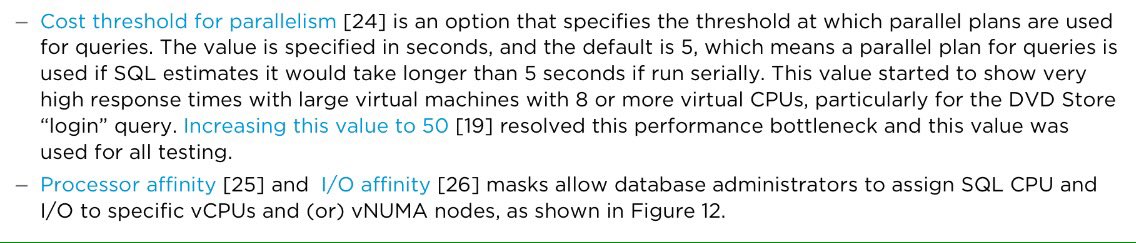

Now lets consider Cost Threshold for Parallelism (CTFP). Here's what appears on page 14 of the test results.

SQL Server VM Performance with VMware vSphere 6.5

https://blogs.vmware.com/performance/2017/03/sql-server-performance-vmware-vsphere-6-5.html

i'm glad they included latency sensitivity in the testing. but the testing methodology limits the usefulness of the conclusion for me.

First a matter of system setup: the max configuration of vcpu count = physical core count seems to have been used.

While certainly better than vcpu count greater than core count (avoiding competition among vcpus for time on core) some core time for nonvcpu worlds and for the hypervisor is unavoidable. That means competition for time-on-core for some of that VM's vcpus.

To neutralize that effect, keeping vcpu count *lower* than core count is expected where consistent peak performance per core is desired.

That's a somewhat similar strategy to reserving vcpu0 in a SQL Server guest for the txlog writer, for example.

Performance per core is an important perspective for SQL Server & Oracle database, because license cost per physical core or vcpu is typically one of the largest individual line items in system cost (if not the largest).

Keeping vcpu count below core count is likely to be even more important for peak performance as cores per socket continues to rise.

Consider this passage from the recently updated VMware SQL Server Best Practices Guide.

http://www.vmware.com/content/dam/digitalmarketing/vmware/en/pdf/solutions/sql-server-on-vmware-best-practices-guide.pdf

Here are some of the results included in the report.

Let's break that down into Orders per Minute (OPM) per physical core.

E7-4870: 962 E7-8890v2: 1181 E7-8890v3: 1322 E7-8890v4: 1287

So there was a drop - a small one - in OPM per physical core between v3 and v4.

In addition to max transactions/sec scale achieved at vcpu = core count on each platform, I'd be interested in comparison for equal vcpu count across platforms.

40 vcpu vm comparison on E7-4870, E7-8890v2, E78890v3, E78890v4.

60 vcpu vm comparison on E7-8890v2, E7-8890v3, E7-8890v4.

72 vcpu vm comparison on E7-8890v3, E7-8890v4.

In fact, to allow room for the nonvcpu worlds, what I'd really like to see is:

36 vcpu vm comparison on E7-4870, E7-8890v2, E78890v3, E78890v4.

56 vcpu vm comparison on E7-8890v2, E7-8890v3, E7-8890v4.

68 vcpu vm comparison on E7-8890v3, E7-8890v4.

92 vcpu vm on E7-8890v4.

But, of course there's only so much time for testing, and only so much attention that can be given to reading test results. 😀 Max scale results are what the largest share of the audience would be interested in. Still - I want to share what makes testing - even thorough, careful testing like this - not directly applicable to some contexts. Mine in particular. 😀

In reality, right-sizing the vcpu count for a system involves more than reserving adequate remaining CPU resources for nonvcpu worlds and the hypervisor. Including more vcpu than is needed in a VM brings additional overhead into the vm - and the effect can be significant.

The first thing to consider is the NUMA node boundary. The reason NUMA exists is to allow platforms to continue to scale to increasing levels of compute and memory resources. NUMA gives preferential access from core to memory based on locality of the memory to the core. Cross the NUMA node boundary in a memory access, and memory bandwidth becomes a consideration as does the increase in memory latency. This is true as well for interprocess communication between threads on cores across the NUMA node boundary. If there's a 14 vcpu vm on a server with 2 twelve core per socket processors... that vm may well be better off with 12 vcpu within a single NUMA node.

Even within a single NUMA node, lowering the number of vcpu reduces scheduling overhead on the hypervisor. Using 10 vcpus within a NUMA node when 6 would suffice? Those extra vcpus are adding scheduling overhead.

Now lets consider Cost Threshold for Parallelism (CTFP). Here's what appears on page 14 of the test results.

Tuesday, March 14, 2017

#TSQL2sday - The Daily WTF - A Tale of 2 Trace Flags

March 13, 2017 is T-SQL Tuesday! Yahoo!! Kennie Nybo Pontoppidan is hosting this month.

Here was the announce...

Announcing T-SQL Tuesday #88: The daily (database-related) WTF

http://www.pontop.dk/single-post/2017/03/07/Announcing-T-SQL-Tuesday-88-%E2%80%93-The-daily-database-related-WTF

And here is my contribution. A list of performance changes is much easier to come up with than the best order to introduce those changes.

Yep, I remember deploying a trace flag on a production server for the first time. Trace flag 8048, to mitigate spinlock contention related to memory allocation. That step followed weeks of observation and research, attempts to recreate the problem on a test system (unsuccessful at the time), reaching out to Microsoft and various experts.

Although it was a nail-biter, it went well. An important weekly batch of reports decreased in execution time from nearly 20 hours to just over 2 hours. Even more reassuringly, looking at graphs of CPU utilization vs read bytes/sec and CPU vs buffer page lookups, the relationships were clear again. As we increased and decreased demand on the server, we saw increases and decreases in CPU utilization. Good.

Soon after, though, on that system and numerous others, we ran into another significant issue limiting scalability and dragging out execution time of batch reports: very large memory grants and long lists of pending memory grants with excessively long resource_semaphore waits.

After significant additional research, we learned that trace flag 4199 had numerous positive effects on our workload. The easiest for us to demonstrate was the positive effect of the fix which could be independently enabled with trace flag 4101 - this fix was also rolled up into the trace flag 4199 fixes.

Here's a reference for that specific fix.

FIX: Reorder outer joins with filter criteria before non-selective joins and outer joins

https://support.microsoft.com/en-us/help/318530/fix-reorder-outer-joins-with-filter-criteria-before-non-selective-joins-and-outer-joins

And here's the posts where I've tried to keep track of all T4199 fixes.

Trace Flag 4199: Complex Risk Assessment Part I

http://sql-sasquatch.blogspot.com/2014/01/trace-flag-4199-complex-risk-assessment.html

Complex Risk Assessment: Part II Trace Flag 4199 fixes w/o individual trace flags & SQL Server 2016 Update

http://sql-sasquatch.blogspot.com/2014/01/trace-flag-4199-complex-risk-assessment_6.html

Trace flag 4199 was good for our workload. We tested it fully on SQL Server 2008R2, and would later replicate the testing on SQL Server 2012 and 2014.

I've long believed that knowing the list of performance recommendations is much easier than knowing the best order to adopt the recommendations. When I went on the road with my knowledge of perfmon, trace flag 8048, and trace flag 4199, strong support was given to that belief.

I left Wisconsin headed for Illinois with another performance engineer for an on-site observation and performance intervention.

For the first few hours we collected data with perfmon and some stored procedures to grab waits, spinlocks, and memory grant information. I was excited to see patterns I recognized - spinlock contention clustered around CMEMTHREAD waits (a hint that trace flag 8048 would be applicable) and "eyes too big for stomach" memory grant requests (in queries with many joins, a hint that trace flag 4199 may be helpful to "right-size" the memory grant).

I'd seen systems that implemented both trace flags as startup parameters simultaneously. I'd helped organizations implement first T8048, then T4199 (based on the timing of my research and testing of the trace flags). This was the first time that there was a desire to implement the trace flags one-at-a-time and we had the choice of which to implement first.

I hadn't chosen to put T8048 in first previously - that was just the way everything worked out. If I chose to follow that order - I'd be doing what I'd seen and done before. But... there was also a reason to choose the reverse order, with T4199 first. Spinlock issues - especially at that time - were considered more exotic performance issues than many of the "plan-shaping" issues that trace flag 4199 addressed. Many administrators were much more familiar with that type of performance issue - eliminating significant waits, altering plan shapes, making the logical work of queries more efficient - than with the busy wait/management overhead of spinlocks. Sometimes demonstrating an improvement that someone is already familiar with evaluating is a plus, helping to gain trust. I didn't know of a specific reason NOT to put trace flag T4199 in place, followed by T8048 later. And in this case it seemed like building up some interpersonal capital might be a good idea.

Trace flag 4199 went in as a service startup parameter, and SQL Server was restarted. When the heavy workload hit, we watched resource utilization closely. Things looked good for a few minutes. Then the server became unresponsive. CPU was saturated.

Trace flag 4199 had made logical work able to try harder. Individual memory grants had shrunk, so query concurrency was higher. The number of concurrent parallel workers was higher. The opportunities for contention in allocation of workspace memory for sorts and hashes increased significantly. And spinlock contention resulted in CPU saturation. There's a condition known as a spinlock convoy - many concurrent threads just trading a spinlock resource among themselves, with all of those not holding the resource spinning. I'd read about that, but never seen it make a server unresponsive until that day.

Fortunately the workload was able to be resubmitted. I sheepishly explained that the server becoming unresponsive was actually a sign that the trace flag was doing what it was supposed to do... but also meant I had chosen the wrong order to enable the trace flags. The server was brought down. When it was brought back up, we switched in trace flag 8048. The workload was resubmitted, and a modest gain was observed. The next day, trace flag 4199 was added and a significant performance and scalability gain was realized.

And I learned to consider much more closely the consequences of the order of performance intervention. That's the only time I can remember trying to explain that the unresponsive server being witnessed by a whole room full of people was actually a good sign. I'm sure there were at least a couple of people in that room thinking "performance intervention, WTF?"

Monday, March 13, 2017

perfmon binary blg files - they're doggone big! an example of relog

At SQLSaturday 600 in Chicago this past weekend (March 11, 2017) I showed that perfmon logged to a binary log file can be much larger than alternative formats like csv.

(My presentation can be found here:

http://www.sqlsaturday.com/600/Sessions/Details.aspx?sid=59564)

Here's ~72 minutes of activity logged in 1 second increments, captured in both a binary blg file and a flat file csv format. 553 columns including the timestamp.

That's more than a 6 times difference in size!

For that reason *if* I use a binary log file at all, I put in a limited set of counters. This is a typical counter set that I may use when capturing perfmon in a blg binary file.

There's only a few reasons I personally use a binary file for logging perfmon, anyway. One is a timing issue. If logman is used to start logging perfmon, and the data collector is started *before* the SQL Server service is started, the only format that can capture the SQL Server specific counters like the list above: blg. That can be a pain in the neck when trying to capture perfmon on a Windows cluster, or around a maintenance window where server restart is expected.

Binary format is kinda nice because as soon as its opened it opens in a graphing interface.

But with a long counter list... that's just a mess.

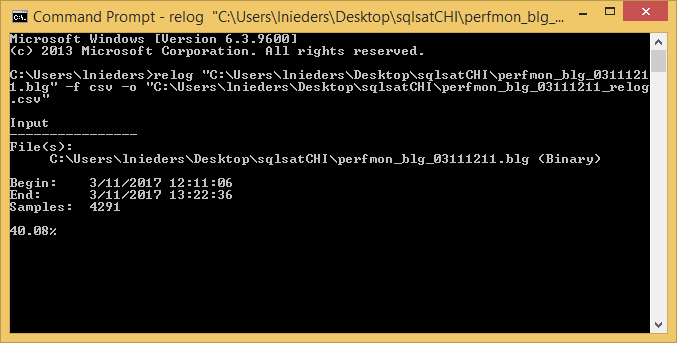

This is the relog in a cmd.exe while its in flight.

And when its complete...

Here's the results of the relog.

So the csv from relogging the blg is still larger than the csv that was captured in csv format. But way smaller than the original blg itself.

And once its in csv format, I can make all of my crazy graphs in Excel 😊

Here's a reference for logman to capture perfmon logs.

https://technet.microsoft.com/en-us/library/bb490956.aspx

And a reference for relog if you want to change log format, narrow the time window, pull out a subset of counters, etc.

https://technet.microsoft.com/en-us/library/bb490958.aspx

(My presentation can be found here:

http://www.sqlsaturday.com/600/Sessions/Details.aspx?sid=59564)

Here's ~72 minutes of activity logged in 1 second increments, captured in both a binary blg file and a flat file csv format. 553 columns including the timestamp.

That's more than a 6 times difference in size!

For that reason *if* I use a binary log file at all, I put in a limited set of counters. This is a typical counter set that I may use when capturing perfmon in a blg binary file.

\SQLServer:Access Methods\Full Scans/sec \SQLServer:Access Methods\Index Searches/sec \SQLServer:Access Methods\Page Splits/sec \SQLServer:Access Methods\Range Scans/sec \SQLServer:Backup Device\* \SQLServer:Buffer Manager\* \SQLServer:Buffer Node(*)\* \SQLServer:Databases(_Total)\Backup/Restore Throughput/sec \SQLServer:Databases(_Total)\Bulk Copy Rows/sec \SQLServer:Databases(_Total)\Bulk Copy Throughput/sec \SQLServer:Databases(_Total)\DBCC Logical Scan Bytes/sec \SQLServer:Databases(_Total)\Log Bytes Flushed/sec \SQLServer:Databases(_Total)\Log Flushes/sec \SQLServer:Databases(tempdb)\Data File(s) Size (KB) \SQLServer:General Statistics\Logins/sec \SQLServer:General Statistics\User Connections \SQLServer:Locks(*)\Lock Requests/sec \SQLServer:Locks(*)\Lock Waits/sec \SQLServer:Memory Manager\* \SQLServer:Memory Node(*)\* \SQLServer:Plan Cache(*)\Cache Pages \SQLServer:Resource Pool Stats(*)\* \SQLServer:SQL Statistics\Batch Requests/sec \SQLServer:SQL Statistics\SQL Compilations/sec \SQLServer:SQL Statistics\SQL Re-Compilations/sec \SQLServer:Transactions\Free Space in tempdb (KB) \SQLServer:Wait Statistics(*)\* \SQLServer:Workload Group Stats(*)\*

There's only a few reasons I personally use a binary file for logging perfmon, anyway. One is a timing issue. If logman is used to start logging perfmon, and the data collector is started *before* the SQL Server service is started, the only format that can capture the SQL Server specific counters like the list above: blg. That can be a pain in the neck when trying to capture perfmon on a Windows cluster, or around a maintenance window where server restart is expected.

Binary format is kinda nice because as soon as its opened it opens in a graphing interface.

But with a long counter list... that's just a mess.

I do a lot of work in Excel. Years ago I used tsv format mainly for logging perfmon. But then somewhere along the line, Excel stopped recognizing tsv as a native filetype for itself. I messed around changing associations for a while... ending up giving up and using csv format from then on.

So if I do get a large blg binary format perfmon capture, I relog it. Like this...

relog "C:\Users\lnieders\Desktop\sqlsatCHI\perfmon_blg_03111211.blg" -f csv -o "C:\Users\lnieders\Desktop\sqlsatCHI\perfmon_blg_03111211_relog.csv"

This is the relog in a cmd.exe while its in flight.

And when its complete...

Here's the results of the relog.

So the csv from relogging the blg is still larger than the csv that was captured in csv format. But way smaller than the original blg itself.

And once its in csv format, I can make all of my crazy graphs in Excel 😊

Here's a reference for logman to capture perfmon logs.

https://technet.microsoft.com/en-us/library/bb490956.aspx

And a reference for relog if you want to change log format, narrow the time window, pull out a subset of counters, etc.

https://technet.microsoft.com/en-us/library/bb490958.aspx

Thursday, March 9, 2017

Some tools I use in blogging...

***** 2017 March 9 *****

I go here all the time to keep SQL Server builds and versions straight.

https://sqlserverbuilds.blogspot.com/

I use hilite.me for HTML code formatting. (I like SQL language, vs style.)

http://hilite.me/

**********

I go here all the time to keep SQL Server builds and versions straight.

https://sqlserverbuilds.blogspot.com/

I use hilite.me for HTML code formatting. (I like SQL language, vs style.)

http://hilite.me/

**********

SQL Server - Who's got a lock and how long've they had it?

SQL Server 2016 SP1 for the adventure below.

A question came up this morning on Twitter #sqlhelp about capturing all activity against a given SQL Server table for a period of time. The goal was to identify what was taking out a lock on a table and taking its sweet time before releasing it 😀

Extended Events and SQL Trace are options to capture all of this activity. But the observer overhead is something to consider.

Depending on whether observer overhead is a risk and whether transactions span SQL requests (and thus might prevent identifying the SQL text responsible for the lock request), polling at a given interval such as 1 or 5 minutes with a query like that below might also fit the bill.

Session 1

Create the table, grab table lock.

Session 2

Identify the locks

Results of the query while the batch which created the table is still executing (during the waitfor).

Results of the query after the batch has completed - no request so no sql_handle => no SQL text returned. But the lock is still held.

Polling for locks every minute or so and storing results in a table can be much more lightweight than a trace or Extended events. However - not guaranteed to capture the SQL text which was responsible for creating the lock if the transaction spans batches/requests. So that's the trade-off.

A reminder to myself to clean up after myself.

A question came up this morning on Twitter #sqlhelp about capturing all activity against a given SQL Server table for a period of time. The goal was to identify what was taking out a lock on a table and taking its sweet time before releasing it 😀

Extended Events and SQL Trace are options to capture all of this activity. But the observer overhead is something to consider.

Depending on whether observer overhead is a risk and whether transactions span SQL requests (and thus might prevent identifying the SQL text responsible for the lock request), polling at a given interval such as 1 or 5 minutes with a query like that below might also fit the bill.

Session 1

Create the table, grab table lock.

drop table if exists sqL_handLe; begin tran create table sqL_handLe (pk bigint); waitfor delay '00:00:30';

Session 2

Identify the locks

select so.name as [lock_object_name], tl.resource_associated_entity_id as [lock_object_id], tl.resource_database_id, st.session_id, dt.database_transaction_begin_time, --dt.database_transaction_log_record_count, --dt.database_transaction_log_bytes_used, --dt.database_transaction_log_bytes_reserved, tl.request_mode, tl.request_status, tl.resource_type, --tl.resource_subtype, --tl.resource_description, --tl.resource_lock_partition, sqlT.text as request_sql_text from sys.dm_tran_locks tl join sys.objects so with (nolock) on so.object_id = tl.resource_associated_entity_id join sys.dm_tran_database_transactions dt on dt.transaction_id = tl.request_owner_id and tl.resource_database_id = dt.database_id join sys.dm_tran_session_transactions st on st.transaction_id = tl.request_owner_id full outer join sys.dm_exec_requests req on req.session_id = st.session_id outer apply sys.dm_exec_sql_text(req.sql_handle) as sqlT where so.name ='sqL_handLe'

Results of the query while the batch which created the table is still executing (during the waitfor).

Results of the query after the batch has completed - no request so no sql_handle => no SQL text returned. But the lock is still held.

Polling for locks every minute or so and storing results in a table can be much more lightweight than a trace or Extended events. However - not guaranteed to capture the SQL text which was responsible for creating the lock if the transaction spans batches/requests. So that's the trade-off.

A reminder to myself to clean up after myself.

drop table if exists sqL_handLe; commit tran

Wednesday, March 8, 2017

SQL Server 2016 SP1 EE checkdb with physical_only: Trace flags 2549 & 2562

First things first: I don't think trace flag 2549 does anything in SQL Server 2016 SP1 Enterprise Edition.

Last time I said that, I was wrong 😀. I was tricked by trace flag 2549 needing to be enabled globally in order to change behavior - I was testing with it enabled at session level. But... after opening a ticket with Microsoft the kb article was updated to note that T2549 must be enabled globally 😀.

*This* time I made sure to enable T2549 globally. But I didn't see any difference in behavior although all 8 of my database filegroup data files were in directories under mount points of a single drive letter. Checkdb reads were *really* aggressive either way. Like - more aggressive than previously when T2549 did make a difference. My execution times were within 5% of each other. I'll scrutinize the perfmon and session waits more later. But for now, I'm assuming that behavior is the same and expected performance the same with or without T2549.

Now on to the good stuff. As I mentioned, checkdb with physical_only reads are quite aggressive in SQL Server 2016 SP1. The system I tested on is an 8 vcpu vm with 64 GB RAM. The storage is from a hybrid SAN array, although all of the disk devices are SSDs. Aggregate database data footprint - across 8 files in a single filegroup - is 3335GB. (Sum of fileproperty(<name>,'spaceused') across the 8 files).

Let's see some graphs!!!

Look at that read throughput in a vanilla checkdb with physical_only! These perfmon captures are using 1 second intervals - nearly saturating bandwidth for a considerable amount of time during its execution.

This brings up an important point about decisions in performance engineering and planning for systems. If the main goal is completing checkdb as quickly as possible and the 10-12 minutes saved execution time is valuable enough - I'd definitely use T2562 on this system.

But... if there was a concurrent query workload that generated 100 mb/s of read load? I'd strongly consider running checkdb without T2562. Even though it would take a bit longer, there's room in there for additional resource utilization before hitting saturation.

Last time I said that, I was wrong 😀. I was tricked by trace flag 2549 needing to be enabled globally in order to change behavior - I was testing with it enabled at session level. But... after opening a ticket with Microsoft the kb article was updated to note that T2549 must be enabled globally 😀.

*This* time I made sure to enable T2549 globally. But I didn't see any difference in behavior although all 8 of my database filegroup data files were in directories under mount points of a single drive letter. Checkdb reads were *really* aggressive either way. Like - more aggressive than previously when T2549 did make a difference. My execution times were within 5% of each other. I'll scrutinize the perfmon and session waits more later. But for now, I'm assuming that behavior is the same and expected performance the same with or without T2549.

Now on to the good stuff. As I mentioned, checkdb with physical_only reads are quite aggressive in SQL Server 2016 SP1. The system I tested on is an 8 vcpu vm with 64 GB RAM. The storage is from a hybrid SAN array, although all of the disk devices are SSDs. Aggregate database data footprint - across 8 files in a single filegroup - is 3335GB. (Sum of fileproperty(<name>,'spaceused') across the 8 files).

Let's see some graphs!!!

Look at that read throughput in a vanilla checkdb with physical_only! These perfmon captures are using 1 second intervals - nearly saturating bandwidth for a considerable amount of time during its execution.

Hard to see because of the scale, but there's a rise and and fall in memory grant usage corresponding to the "batches" that checkdb is using. Way less than 10 gb of Tempdb is used at peak, for less than 6 minutes out of the roughly 60 minute execution time.

Let's take a look at CPU utilization. Ohhh yeahh! Most of the time there were 16 checkdb parallel threads in use for this 8 vcpu VM. The only break in fairly heavy CPU utilization came during the processing of that large batch in the middle.

OK. Recall that trace flag 2562 instructs checkdb to use a single large "batch" for all of its checking. This can lead to a significant speedup - especially on spinning disk. The cost is more use of tempdb. The next test - checkdb with physical_only + T2562 - demonstrates both the benefit and cost of this behavior.

Hooo doggie!! It only took about 48 minutes and 30 seconds now! Look at that fascinating step function of tempdb use!! Notice how the read bytes/sec plummet briefly before each new step of tempdb use. If only I had enough time to dig into the mechanics of that! Maybe another day...

All right - lets take a look at CPU utilization. CPU also drops at the beginning of each new step of tempdb use. How tantalizing!

But there you have it. Pretty aggressive checkdb with physical_only in SQL Server 2016 SP1. Even a little bit more aggressive with trace flag 2562 enabled. (I enabled it globally for these tests.)

When planning for checkdb with physical_only in SQL Server 2016 SP1, you may want to stick it into its own Resource Governor workload group so you can independently control DOP, maybe tinker with its max memory grant, and maybe even use IOPs governance. I hope to return to those matters sometime soon here. But first gotta also test T2528 (disable parallel checks) and full checkdb.

I should note that these checkdb tests were run in isolation. If there is another concurrent workload on the system, not only will it be effected by checkdb, but that workload will impact checkdb performance. Even moreso if that concurrent workload contains DML (because the internal snapshot for checkdb must be maintained).

*****2017 03 08 addendum *****

I wanted to further detail disk IO activity with graphs. Since the end-point devices are all SSDs, there is less variability in latency and read bytes/sec than would be expected with 10k or 15k fibre channel HDDs.

That's pretty good read throughput, at pretty low latency.

Pretty spikey read IOPs from second to second.

Now let's look at the checkdb run with T2562 enabled. First at read bytes/sec against sec/read service time.

Throughput is even higher - from peaks of 1200 mb/sec to peaks of 1600 mb/sec. That additional throughput comes at a cost though - read service time has risen to about 8 times its previous values.

Lets look at read IOPs vs read service time for the checkdb with T2562.

IOPs have come down even though throughput went up. So the average IO size is higher. But the jump in service time is much higher than predicted by the increase in average read IO size alone.

Rather, saturation of bandwidth must account for some of the increase in service time. In general, at saturation performance behavior is going to deviate from expectation based on non-saturated behavior.

This brings up an important point about decisions in performance engineering and planning for systems. If the main goal is completing checkdb as quickly as possible and the 10-12 minutes saved execution time is valuable enough - I'd definitely use T2562 on this system.

But... if there was a concurrent query workload that generated 100 mb/s of read load? I'd strongly consider running checkdb without T2562. Even though it would take a bit longer, there's room in there for additional resource utilization before hitting saturation.

Anyway... ciao for now!

Friday, March 3, 2017

SQL Server 2016 Spinlocks: Query Store

Recently on #sqlhelp there was an instance of a 1 GB Query Store being nearly full, and the instance slowing to a crawl. It was difficult to even run diagnostic queries, and to resolve the service was restarted and Query Store set to read only (so all contents were retained).

Hard to say if the problem was work or wait. Certainly both could have contributed. But most diagnostic queries are pretty light - unless CPU is saturated or there are long waits to compile or get a memory grant, they'll usually run even if a bit sluggish.

So I suspect that this was a work problem. Eg with the Query Store nearly full, management work became so expensive that user query work was starved for CPU time. That doesn't seem far-fetched because the number of queries in the 1 GB query store was over 100,000 if I recall correctly.

There have been issues in the past with the plan cache when it was at or near plan count capacity - it would become a one-in-one-out scenario, with the session that wants to insert a new plan being responsible for the necessary cleanup. If the hash buckets were really long it became expensive to walk them.

I'm not sure how size-based cleanup is implemented for the Query Store, other than seeing documented that it kicks in near 80% of configured limit. If sessions waiting to compile a query take on responsibility of cleaning out stuff from Query Store, there could be a situation very similar to the plan cache conundrum of old.

If that's happening and CPU utilization is near 100% either server-wide or on a subset of cores, then spinlock stats may become more enlightening than wait stats.

I did see a number of new spinlocks added for Query Store...

QUERY_STORE_ASYNC_PERSIST

QUERY_STORE_CAPTURE_POLICY_INTERVAL

QUERY_STORE_CAPTURE_POLICY_STATS

QUERY_STORE_HT_CACHE

QUERY_STORE_PLAN_COMP_AGG

QUERY_STORE_PLAN_LIST

QUERY_STORE_SELF_AGG

QUERY_STORE_STMT_COMP_AGG

SPL_QUERY_STORE_EXEC_STATS_AGG

SPL_QUERY_STORE_EXEC_STATS_READ

SPL_QUERY_STORE_STATS_COOKIE_CACHE

Hard to say if the problem was work or wait. Certainly both could have contributed. But most diagnostic queries are pretty light - unless CPU is saturated or there are long waits to compile or get a memory grant, they'll usually run even if a bit sluggish.

So I suspect that this was a work problem. Eg with the Query Store nearly full, management work became so expensive that user query work was starved for CPU time. That doesn't seem far-fetched because the number of queries in the 1 GB query store was over 100,000 if I recall correctly.

There have been issues in the past with the plan cache when it was at or near plan count capacity - it would become a one-in-one-out scenario, with the session that wants to insert a new plan being responsible for the necessary cleanup. If the hash buckets were really long it became expensive to walk them.

I'm not sure how size-based cleanup is implemented for the Query Store, other than seeing documented that it kicks in near 80% of configured limit. If sessions waiting to compile a query take on responsibility of cleaning out stuff from Query Store, there could be a situation very similar to the plan cache conundrum of old.

If that's happening and CPU utilization is near 100% either server-wide or on a subset of cores, then spinlock stats may become more enlightening than wait stats.

I did see a number of new spinlocks added for Query Store...

SELECT name FROM sys.dm_os_spinlock_stats WHERE name LIKE '%QUERY_STORE%' ORDER BY name

QUERY_STORE_ASYNC_PERSIST

QUERY_STORE_CAPTURE_POLICY_INTERVAL

QUERY_STORE_CAPTURE_POLICY_STATS

QUERY_STORE_HT_CACHE

QUERY_STORE_PLAN_COMP_AGG

QUERY_STORE_PLAN_LIST

QUERY_STORE_SELF_AGG

QUERY_STORE_STMT_COMP_AGG

SPL_QUERY_STORE_EXEC_STATS_AGG

SPL_QUERY_STORE_EXEC_STATS_READ

SPL_QUERY_STORE_STATS_COOKIE_CACHE

CREATE INDEX sort_in_tempdb - test model for sort/hash spill stress?

These results are from a 4 vcpu VMware VM. The test is an offline CREATE INDEX, at MAXDOP 4. This vm - running SQL Server 2014 with trace flags 1117 and 1118 globally enabled - has two equally sized tempdb files, each on their own Windows volume in the guest (no other files in those NTFS filesystems). The tempdb Windows volumes, like the other volumes holding persistent database files or transaction logs (including the tempdb transaction log) are distributed among 3 pvscsi vHBAs. But the underlying storage is by no means supercharged here. This system isn't a full performance-at-scale testing rig. More like dev & exploration 😁

The 4 parallel threads (the dark blue area) are keeping the vcpu at 100% cpu busy (the red dots). The CREATE INDEX is the only active request in the default workload group (the really light blue dots), and there are no other workload groups except the internal group. Perfmon was collecting metrics in 1 second intervals. Notice that it caught a task waiting for a page latch for a few seconds!! (That's the light blue dashed line.) But - that waiter was at a time of reported 100% vcpu utilization. Any optimization that removed that wait with no other changes would simply see the time replaced with signal wait or sos_suspend_queue time.

Well... how about query memory and tempdb usage? The query received and used a memory grant of almost 4 GB, which it used in a step function manner as seen in red dotted line below. The one second perfmon collection interval really shows the microburst nature of this tempdb activity. Those one second burst are up to 500 mb/sec. That type of write throughput can be really punishing to shared storage.

Let's take a look at the tempdb footprint during this CREATE INDEX. The bursts of writes leads to pretty steady tempdb footprint growth throughout the activity.

Let's take a look at writes per second to these two Windows volumes, rather than write bytes. The shape of the area graph is identical - only the unit of measure has changed. Throughout the operation the writes are 64kb extents. Since this CREATE INDEX is running in isolation, the writes and write bytes are divided almost exactly between the two files. (Although its kind of hard to tell in these graphs - next time I'll have to grab virtual file stats before and after, too, to show the breakdown.)

OK, one last graph I'll share from this experiment: vCPU utilization vs write bytes. With more vcpus and a higher DOP for the operation (or two concurrent operations at DOP 4) I'd expect more work to be done per unit of time. And for the write rate to increase accordingly. I kind of cheated because before I ran this test I looked at some of my old 1 second captures from an 8 vcpu system on a monster server :-) That test was with a varied workload of complex, long-running SQL queries at query concurrency 16 (enforced by Resource Governor active request limit). That system did get peaks of over 1.2 GB/s of writes to its two tempdb files, though. 😊

Now, my working assumption is that sort_in_tempdb has the same potential allocation page bottleneck as sort and hash spill, and ultimately the same as the allocation page contention that we know and love from systems with session counts many times the (v)cpu count putting pressure on lots of temp tables with create/destroy/populate.

But on these systems the target for query concurrency is typically 2 to 3 times vcpu count, with a MAXDOP no higher than 8. Of the queries running, only 2 to 4 are expected to spill concurrently at any given time. There is no significant contending concurrent use of #temp or ##globaltemp tables among the concurrent queries.

I believe that type of workload (where spill is the main consideration) has a very different profile from the typical use cases that drive count of tempdb files based on (v)CPU count. For a number of reasons, I think on these systems even up to 32 physical cores 2 or 4 tempdb data files(each on their own Windows volume or co-located if onboard SSD/flash is used for tempdb) will be better than 8 or more. Hopefully I'll articulately explain why later...

For now its off to check on resources for a larger scale run of this test - with single command at high DOP and mulitple concurrent commands at lower DOP to see how the waiters, CPU utilization, and write activity play out.

The 4 parallel threads (the dark blue area) are keeping the vcpu at 100% cpu busy (the red dots). The CREATE INDEX is the only active request in the default workload group (the really light blue dots), and there are no other workload groups except the internal group. Perfmon was collecting metrics in 1 second intervals. Notice that it caught a task waiting for a page latch for a few seconds!! (That's the light blue dashed line.) But - that waiter was at a time of reported 100% vcpu utilization. Any optimization that removed that wait with no other changes would simply see the time replaced with signal wait or sos_suspend_queue time.

Well... how about query memory and tempdb usage? The query received and used a memory grant of almost 4 GB, which it used in a step function manner as seen in red dotted line below. The one second perfmon collection interval really shows the microburst nature of this tempdb activity. Those one second burst are up to 500 mb/sec. That type of write throughput can be really punishing to shared storage.

Let's take a look at the tempdb footprint during this CREATE INDEX. The bursts of writes leads to pretty steady tempdb footprint growth throughout the activity.

Let's take a look at writes per second to these two Windows volumes, rather than write bytes. The shape of the area graph is identical - only the unit of measure has changed. Throughout the operation the writes are 64kb extents. Since this CREATE INDEX is running in isolation, the writes and write bytes are divided almost exactly between the two files. (Although its kind of hard to tell in these graphs - next time I'll have to grab virtual file stats before and after, too, to show the breakdown.)

OK, one last graph I'll share from this experiment: vCPU utilization vs write bytes. With more vcpus and a higher DOP for the operation (or two concurrent operations at DOP 4) I'd expect more work to be done per unit of time. And for the write rate to increase accordingly. I kind of cheated because before I ran this test I looked at some of my old 1 second captures from an 8 vcpu system on a monster server :-) That test was with a varied workload of complex, long-running SQL queries at query concurrency 16 (enforced by Resource Governor active request limit). That system did get peaks of over 1.2 GB/s of writes to its two tempdb files, though. 😊

Now, my working assumption is that sort_in_tempdb has the same potential allocation page bottleneck as sort and hash spill, and ultimately the same as the allocation page contention that we know and love from systems with session counts many times the (v)cpu count putting pressure on lots of temp tables with create/destroy/populate.

But on these systems the target for query concurrency is typically 2 to 3 times vcpu count, with a MAXDOP no higher than 8. Of the queries running, only 2 to 4 are expected to spill concurrently at any given time. There is no significant contending concurrent use of #temp or ##globaltemp tables among the concurrent queries.

I believe that type of workload (where spill is the main consideration) has a very different profile from the typical use cases that drive count of tempdb files based on (v)CPU count. For a number of reasons, I think on these systems even up to 32 physical cores 2 or 4 tempdb data files(each on their own Windows volume or co-located if onboard SSD/flash is used for tempdb) will be better than 8 or more. Hopefully I'll articulately explain why later...

For now its off to check on resources for a larger scale run of this test - with single command at high DOP and mulitple concurrent commands at lower DOP to see how the waiters, CPU utilization, and write activity play out.

Subscribe to:

Posts (Atom)